Converting an SSIS Project

BimlFlex allows for a rapid transition from an SSIS orchestration to an Azure Data Factory orchestration by applying the settings described in this guide. The key points to consider are any existing SSIS Dataflow Expressions across Configurations, Settings and Data Type Mappings. Azure Data Factory has a separate Expression Language from SSIS and as such requires separate logic and configuration.

Some environments require additional setup outside of the installation and configuration of BimlFlex. The scenarios where configuration must be completed outside of the BimlFlex/BimlStudio environment are listed below.

An on-premises data source requires the installation and configuration of a Self-Hosted Integration Runtime so that the Azure Data Factory can access the data. For additional details on installing and configuring an Integration Runtime, refer to the guides below, which provide additional reference material and configuration details:

- Microsoft Docs: Create and configure a self-hosted integration runtime
- Microsoft Docs: Integration runtime in Azure Data Factory

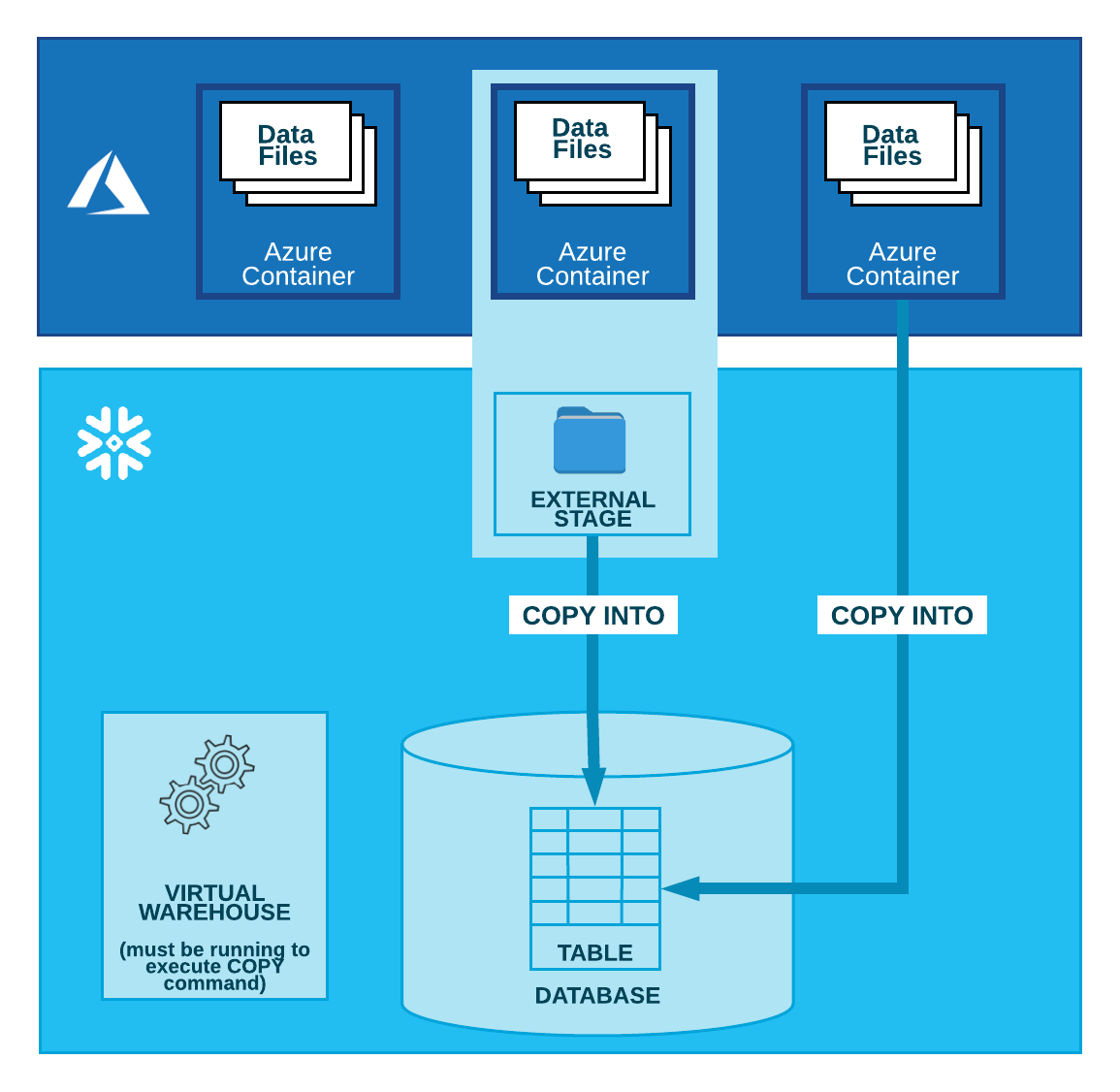

For the Serverless Azure Synapse workspace scenario, use the Git integration and the individual JSON files generated by BimlFlex to populate the integrated Data Factory. BimlFlex also outputs the JSON for each artifact individually, should they be required for additional deployment methodologies. These are found inside the \DataFactories\\ directories named. For additional details on deploying your Azure Data Factory, refer to the referenced deployment guides.

That concludes the deployment of an Azure Data Factory. If not already done, the Staging Area database also needs to be completed prior to the execution of any Azure Pipelines. Additional steps for this, and for the other Integration Stages, can be found in the Deploying the Target Warehouse Environment section below.

If accessing an on-premises data source, an Integration Runtime also needs to be configured. The example uses an Azure Key Vault with pre-configured Secrets. Refer to Azure Key Vault and Secrets for details on configurations. Additional reading is provided under Detailed Configuration to highlight initial environment requirements, alternate configurations and optional settings.

In addition to the scenario given in the example, BimlFlex supports multiple Integration Stages and Target Warehouse Platforms. The following sections outline the specific considerations when using Azure Data Factory across various architectures and platforms. Although features are highlighted that are Azure Synapse or Snowflake specific, the following articles are only designed to highlight the Azure Data Factory implications. The referenced implementation guides should still be consulted prior to deploying an Azure Data Factory using either platform.

All Projects require a Batch and, at minimum, a Source Connection and a Target Connection.
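The rule that every Project needs a Batch plus at least a Source Connection and a Target Connection can be sketched as a simple pre-flight check. The `Project` class and its field names below are hypothetical stand-ins for illustration; they are not BimlFlex types or metadata column names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Project:
    # Hypothetical stand-in for project metadata; not a BimlFlex type.
    name: str
    batch: Optional[str] = None
    source_connection: Optional[str] = None
    target_connection: Optional[str] = None


def missing_requirements(project: Project) -> List[str]:
    """List the required items (Batch, Source/Target Connection) that are not set."""
    required = [
        ("Batch", project.batch),
        ("Source Connection", project.source_connection),
        ("Target Connection", project.target_connection),
    ]
    return [label for label, value in required if not value]


if __name__ == "__main__":
    # Example values are made up for the demonstration.
    draft = Project(name="EXT_Sales", batch="EXT_Sales_Batch", source_connection="SalesDb")
    print(missing_requirements(draft))
```

Running a check like this before building would surface an incomplete Project early, rather than at deployment time.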
BimlFlex simplifies the creation of Azure Data Factory (ADF) data logistics processes.

ADF is Microsoft's cloud-native solution for processing data and orchestrating data movement. It allows data processing to happen in a cloud environment, minimizing any requirements for local infrastructure.

A BimlFlex solution that has been configured with the Azure Data Factory Integration Stage consists of the Data Factory, which is used to extract data from sources, and an in-database ELT processing step, which loads the extracted data into target staging, persistent staging, Data Vault and Data Mart structures.

An ADF environment can be maintained using two main methods:

- An ARM template
- Data Factory JSON files integrated into a source control repository

BimlFlex can create both of these output artifacts, allowing the designer to pick the process that works best in the current environment.

Deploying the environment through the ARM template includes everything required for the environment, including non-ADF resources when applicable, such as the BimlFlex Azure Function used to process file movements and send SQL commands to Snowflake.

Using the source control-integrated approach allows the designer to connect the Data Factory to a Git repository, where ADF resources are represented using JSON files. The designer builds these JSON files from BimlStudio, commits them to the repository and pushes them to origin, allowing ADF to show the artifacts in the Azure Data Factory design environment.

For implementations that use the Serverless Azure Synapse workspace approach, Git integration is mandated by Microsoft, and ARM template deployments are currently not available for the integrated Data Factory.
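Because the source control approach represents ADF resources as JSON files in a Git repository, it can be useful to sanity-check the generated files before committing them. The following is a minimal sketch that only confirms each file parses as JSON and counts files per sub-folder; the folder layout is an assumption, and the function is not part of BimlFlex or BimlStudio.

```python
import json
import sys
from collections import Counter
from pathlib import Path


def inventory_json_files(root):
    """Count parseable JSON files per sub-folder of root and list files that fail to parse."""
    root = Path(root)
    counts = Counter()
    invalid = []
    for path in sorted(root.rglob("*.json")):
        folder = path.parent.relative_to(root).as_posix()
        try:
            # utf-8-sig tolerates an optional byte order mark at the start of the file.
            with path.open(encoding="utf-8-sig") as handle:
                json.load(handle)
        except (json.JSONDecodeError, UnicodeDecodeError):
            invalid.append(path.relative_to(root).as_posix())
            continue
        counts[folder] += 1
    return dict(counts), invalid


if __name__ == "__main__":
    # The output folder is passed on the command line; no default path is assumed.
    counts, invalid = inventory_json_files(sys.argv[1])
    for folder, count in sorted(counts.items()):
        print(f"{folder}: {count} file(s)")
    for name in invalid:
        print(f"could not parse: {name}")
```

A check like this could run before the commit and push step, so that a malformed file is caught locally instead of surfacing in the Azure Data Factory design environment.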
